Video Stabilization “MovieSolid®”

“MovieSolid,” a video stabilization technology, has been one of Morpho's major products since the company was founded, and it is currently offered with smartphones as its primary target.

The engineer in charge of developing “MovieSolid” talks about its development and product features, as well as the possibilities of imaging AI across a wide range of fields.

Hello, this is Dr. Masaki Satoh, a senior research engineer in the CTO office. I’m in charge of research and development of video products, including MovieSolid, a video image stabilizer, and Morpho Frame Interpolator, a frame rate conversion technology.

In graduate school, I did research in cosmology, a field completely unrelated to my current job. These days it's not unusual for a physics or math Ph.D. student to take a job at a tech company and get involved in imaging technology. However, when I joined Morpho in 2011, right before the deep learning revolution, I think it was rarer than it is now.

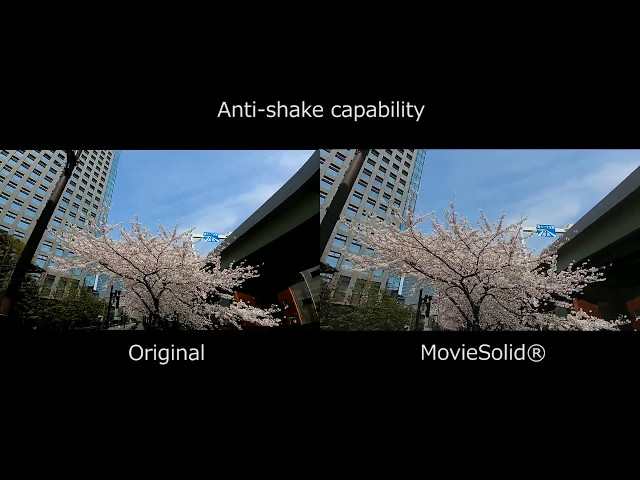

Take a look at the following video. The video on the left was shot with a smartphone, and the one on the right is the result of applying MovieSolid to it. As you can see, MovieSolid is a technology that suppresses camera shake; in other words, it “stabilizes” a video.

MovieSolid cancels out camera shake electronically by cropping a region of each frame. This technique is called electronic image stabilization. There is also a technique called optical image stabilization, which mechanically moves the lens to compensate for camera shake. The biggest advantage of electronic image stabilization over optical stabilization is that it requires no special hardware, so it can be built into inexpensive products. The disadvantage is that the image must always be cropped, which narrows the effective angle of view.
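To make the cropping idea concrete, here is a minimal sketch of translation-only electronic stabilization. It is not MovieSolid's algorithm, which this article does not describe; the function names, the moving-average smoother, and the one-axis pixel model are illustrative assumptions. `path[i]` is assumed to be the horizontal position, in pixels, of the scene content in frame i. The crop window is shifted to follow the jitter so that the output follows a smoothed path, and the shift is clamped to the margin that cropping leaves on each side. A second helper shows, for an ideal pinhole camera, how much horizontal angle of view remains when only a fraction of the frame width is kept.

```python
import math
from typing import List


def smooth_trajectory(path: List[float], radius: int) -> List[float]:
    """Centered moving average of a 1-D path (window of up to 2*radius + 1 frames)."""
    n = len(path)
    smoothed = []
    for i in range(n):
        lo = max(0, i - radius)
        hi = min(n, i + radius + 1)
        window = path[lo:hi]  # window shrinks near the ends of the clip
        smoothed.append(sum(window) / len(window))
    return smoothed


def crop_offsets(path: List[float], radius: int, margin: float) -> List[float]:
    """Per-frame horizontal offset of the crop window, clamped to +/- margin pixels.

    Content seen through the crop window sits at raw - offset, so an offset of
    (raw - smooth) places it on the smoothed path. The clamp models the fact
    that the crop window cannot leave the full sensor frame.
    """
    smoothed = smooth_trajectory(path, radius)
    offsets = []
    for raw, smooth in zip(path, smoothed):
        correction = raw - smooth
        offsets.append(max(-margin, min(margin, correction)))
    return offsets


def cropped_fov_deg(fov_deg: float, crop_ratio: float) -> float:
    """Horizontal field of view (degrees) left after keeping crop_ratio of the width.

    Pinhole model: half-width in the image plane is proportional to tan(fov / 2).
    """
    half = math.radians(fov_deg) / 2.0
    return math.degrees(2.0 * math.atan(crop_ratio * math.tan(half)))
```

With `path = [0, 4, -4, 4, -4, 0]`, `radius = 1`, and a 3-pixel margin, `crop_offsets` returns `[-2.0, 3.0, -3.0, 3.0, -3.0, 2.0]`. The four middle corrections hit the clamp, so some shake survives. A larger margin absorbs more shake but costs more field of view: keeping 80% of the width of a 90° view leaves about 77.3° (`cropped_fov_deg(90.0, 0.8)`), which is the trade-off described above.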

Several other companies offer electronic video stabilization products. The advantage of MovieSolid over these competitors is that it can be used in a wide variety of situations. It works well with built-in optical stabilizers. It supports strongly distorted camera lenses and multi-camera devices. It also works properly even if camera parameters such as the zoom ratio, or even the camera module itself, change on the fly.

The product itself had existed before I joined Morpho, and I took it over about a year after I joined. However, there was no predecessor to hand it over to me, only code, and that code was far from good. So I deleted all of it and rewrote the product from scratch; in that sense, I think I can say that I created it myself. Since then, I've been doing the core development on my own. As the engineer assigned to MovieSolid, I also developed related video products, such as Morpho Video Denoiser, a noise reduction technology for videos. Then the sales team began asking me to develop more and more new video products, and before I knew it, I was in charge of all of Morpho's video technology.

Here are some interesting points about developing video products, including MovieSolid. If you want to process video in real time, say at a standard frame rate of 30 FPS, all the processing for a single frame must be completed in less than 1/30 of a second (about 33 ms). No matter how clever and brilliant an algorithm is, if it takes longer than that, no one will pay attention to it. In addition, computing resources on mobile devices are limited. When you try to make a good product with limited time and resources, inspiration is the key. For me, it's very exciting to think, “How can I get a good result under such tough conditions?” It’s like solving a puzzle.
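The 1/30-second constraint is simple arithmetic, but it is worth checking against the slowest frame rather than the average, because a single slow frame is enough to miss the deadline. The sketch below is a hypothetical desktop timing harness written only for illustration; `frame_budget_ms` and `fits_budget` are not Morpho APIs, and a meaningful measurement would run on the target device with realistic frames.

```python
import time
from typing import Callable


def frame_budget_ms(fps: float) -> float:
    """Maximum processing time per frame, in milliseconds, to keep up with fps."""
    return 1000.0 / fps


def fits_budget(process: Callable[[], None], fps: float, runs: int = 30) -> bool:
    """Call process() several times and check the worst case against the budget.

    The worst case is used instead of the mean: one late frame is already a
    missed deadline in a real-time pipeline.
    """
    budget = frame_budget_ms(fps)
    worst_ms = 0.0
    for _ in range(runs):
        start = time.perf_counter()
        process()
        elapsed_ms = (time.perf_counter() - start) * 1000.0
        worst_ms = max(worst_ms, elapsed_ms)
    return worst_ms < budget
```

`frame_budget_ms(30)` is about 33.3 ms and `frame_budget_ms(60)` about 16.7 ms, so doubling the frame rate halves the time available for each frame.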

MovieSolid is more of a “traditional” computer vision technology than a cutting-edge algorithm, and I like that. However, the environment surrounding computer vision is changing very rapidly, and I think it’s necessary to incorporate machine learning technologies into MovieSolid. Of course, it still makes no sense to leave the entire stabilization process to machine learning, but there must be a practical way to use it. I'd like to explore this possibility in the future.

Off topic: I think most physicists don't study physics because they enjoy building models and doing calculations, but primarily because they want to know the laws of the universe or to explain certain phenomena. In other words, their main interest is the purpose, not the means.

This is not only true for physicists. Some people work because they are interested in the purpose. On the other hand, there are others who are not interested in the purpose itself but work because they enjoy computation and development as the means. I'm the latter type. I probably shouldn't say this, but I don't have much to say about technology in general or about the possibilities of imaging AI. Needless to say, it's important to talk about ideals and have goals, but I think it’s also important to accept people who don’t have admirable, grand goals, like me. I'd be happy if someone became interested in Morpho's culture by thinking, “There are people who think like this.”

However, there are aspects of machine learning that I have to think about. As I said, I'm personally more interested in the means than in the purpose, and therein lies the problem. Nowadays, I feel that this “means” part is rapidly being replaced by machine learning. I think this is purely a good thing for those who are interested in the purpose, but it is a threat to those who are interested in the means. Lately, I've been thinking a lot about how I can contribute to the field of video image processing in a world where machine learning is rapidly replacing the good old computer vision algorithms.

MovieSolid®

Video Stabilization

“MovieSolid” is electronic image stabilization for moving pictures, utilizing IMU sensor data or the motion detection technology “SOFTGYRO®”.
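When IMU data is available, camera rotation can be estimated by integrating the gyroscope's angular rate. For an ideal pinhole camera, rotating by an angle θ moves the image center by about f·tan θ pixels, where f is the focal length in pixels. The sketch below shows only that basic relation. How MovieSolid or SOFTGYRO® actually use motion data is not described here, and the helper names are hypothetical. It ignores lens distortion, rolling shutter, camera translation, and gyro bias. It also hints at why on-the-fly zoom changes matter: the effective focal length in output pixels grows with the zoom ratio, so the same hand shake produces a proportionally larger pixel shift.

```python
import math
from typing import List, Tuple


def integrate_gyro(samples: List[Tuple[float, float]]) -> List[float]:
    """Integrate (timestamp_s, angular_rate_rad_per_s) samples into angles in radians.

    Trapezoidal rule; the angle at the first sample is defined as 0.
    """
    angles = [0.0]
    for (t0, w0), (t1, w1) in zip(samples, samples[1:]):
        angles.append(angles[-1] + 0.5 * (w0 + w1) * (t1 - t0))
    return angles


def focal_length_px(focal_mm: float, sensor_width_mm: float, image_width_px: int) -> float:
    """Pinhole focal length expressed in pixels of the output image."""
    return focal_mm * image_width_px / sensor_width_mm


def rotation_to_shift_px(angle_rad: float, focal_px: float) -> float:
    """Image-center shift (pixels) caused by a pure yaw or pitch rotation."""
    return focal_px * math.tan(angle_rad)
```

For example, a constant 0.1 rad/s rotation sampled every 0.1 s gives angles of about 0, 0.01, and 0.02 rad. With a 1000-pixel focal length, 0.01 rad corresponds to roughly 10 pixels of shift, and doubling the focal length doubles that shift.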